Data Center Infrastructure Management Software: The Modern Essential Features

As consumer demand and AI workloads have surged, data centers find themselves operating under unprecedented constraints. Distributed compute, higher rack densities, tighter thermal capacity margins, and accelerated deployment cycles strain every aspect of data center infrastructure management (DCIM). In response, DCIM software has expanded from documentation and monitoring solutions into fully operational platforms that support planning, change governance, and system integration across all facility and IT domains.

This article discusses the evolution of DCIM tools in this context and identifies three demand-driven shifts that have principally shaped modern DCIM. From the perspective of these three shifts, the article derives a focused set of technical requirements that define a minimum baseline for the ideal DCIM software.

Summary of essential features of modern data center infrastructure management software

The table below summarizes the core elements of the baseline described above. The remainder of the article expands upon each feature, clarifies the respective technical requirement it addresses, and connects both the features and the requirements to the underlying shifts influencing DCIM evolution.

| Essential Feature | Technical Requirement Description |
|---|---|
| Visualization and digital twins | Maintain a customizable, consistent operational model across physical space, connectivity, and dependencies. |
| Asset and connectivity management | Preserve authoritative asset/connectivity records with extensibility and traceability. |
| Monitoring and environmental telemetry | Observe operational state, discover network devices, and reconcile measured vs. documented conditions. |
| Capacity planning and optimization | Quantify headroom and constraints to support safe allocation and growth. |
| Workflow, governance, and change control | Execute changes with auditability via multiple application views to reduce documentation drift. |
| Reporting and BI | Produce stakeholder-specific reporting without vendor dependency to support analytics integration. |
| Interoperability and adoption | Reduce migration friction and sustain integrations via connectors and robust APIs. |


The evolution of data center infrastructure management software tools

Early DCIM solutions typically targeted discrete technical problems and were vendor-aligned or on proprietary platforms. These tools offered a clear value proposition, with success tied to task- or subsystem-based scope, but implementation of these systems reinforced siloed operational data, tooling, and workflows.

In modern environments where facility infrastructure, IT systems, and monitoring stacks exchange data continuously, interoperability is no longer an enhancement; it is a baseline expectation. As a result, this demand-driven evolution has shifted the original DCIM value proposition from discrete problem-solving to the effective integration of an operational suite of tools.

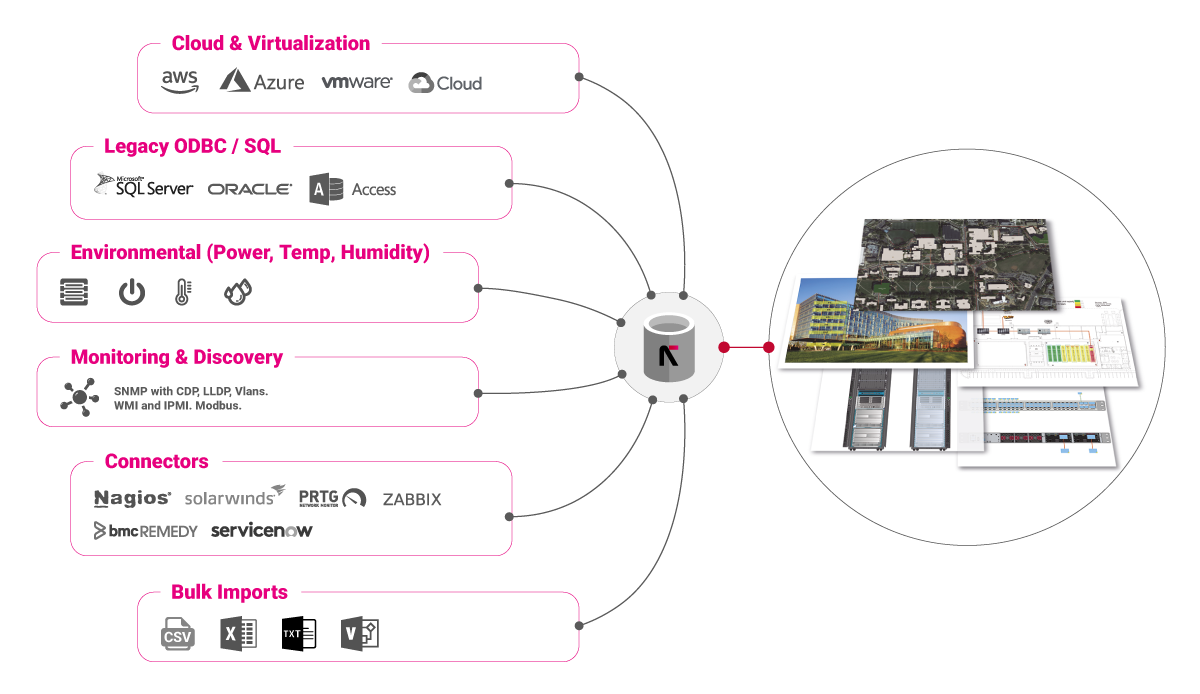

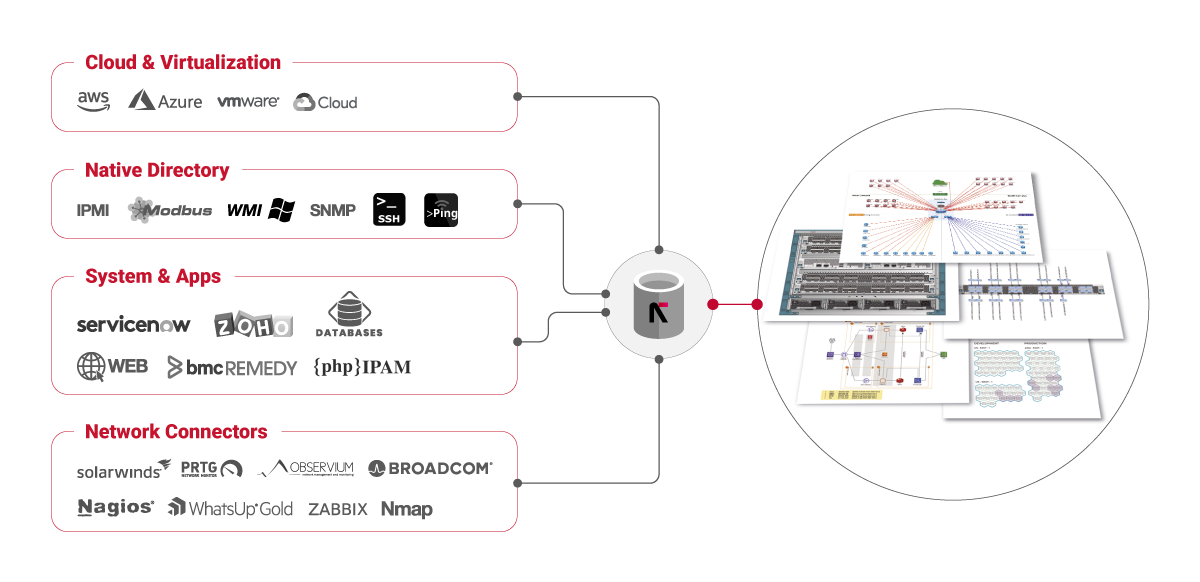

The accompanying image represents a high-level view of a modern data center infrastructure management software platform. Unlike older systems, it combines virtualization, external integrations (connectors), environmental monitoring, legacy artifact integrations, and data imports in a single platform. This consolidation is arguably the most significant shift in modern DCIM software solutions.

Despite this shift toward all-in-one platforms, operational data remains largely heterogeneous in structure. Early DCIM solutions relied heavily on siloed tools and were encumbered by disparate databases and data formats, and modern implementations still encounter similar issues. Even when instrumentation and APIs are mature and highly integrated, organizations maintain static snapshot artifacts (e.g., spreadsheets, diagrams, exports, and PDFs) to reconcile incongruent datasets, support audits, and provide low-friction handoffs. These artifacts persist because they are portable, familiar, and often faster than forcing alignment across multiple systems. In practice, this creates a recurring requirement for DCIM tools to support a navigable model without assuming that all authoritative data will originate inside the platform.

Another aspect of this evolution can be viewed through a facility and reliability lens: the persistent balance between expanding capabilities and operating within tightening constraints. As power density increases and operating costs rise, DCIM platforms are expected to support optimization under constraints. This requires a combination of monitoring, capacity modeling, and change execution, all linked to reduce risk while still supporting sustainable growth. There is little tolerance for error across these combined efforts, and as constraints weigh more heavily on operations, the reliance on cross-functional analysis deepens.

Ultimately, across most active DCIM programs, especially where the strain of market forces and consumer needs has tempered operational and organizational postures, three demand-driven shifts consistently appear:

- From static artifacts toward integrated, navigable models, while recognizing that “snapshot” documentation remains operationally necessary

- From siloed tooling to interoperable platforms

- From primarily operations and maintenance (O&M) of current systems to optimizing under tightening constraints

Taken together, these shifts represent the overall evolution of DCIM software and set the benchmarks for what can be considered the ideal solution: built on a flexible framework of interoperability, with dynamic modeling and systems optimized for long-term operational sustainability. The subsections below explore each shift in more depth, in terms of both the technical requirements and the operational benefits associated with its implementation.

Shift 1: Static artifacts to navigable models

The ideal DCIM should serve as a shared model that multiple disciplines use to interpret the operational environment. As infrastructure becomes denser and more interconnected, static documents remain useful but do not scale as a primary interface. The practical requirement is a navigable model that supports rapid understanding, easy iteration, and the opportunity for emergent interpretation of system data. Through implementation, the following benefits can be realized:

- Visualization depth increases: Floorplans, rack elevations, port-level context, topology and dependency views become baseline expectations. Siloed datasets can be merged to form overlapping data meshes across the environment interface, while not being constrained to specific numbers or sequences of data layers (parent-to-child relationships).

- Traceability becomes operational: End-to-end path traces are used routinely for fault analysis, incident response, system evaluation, and change execution windows. This includes hybrid connectivity models where both inside-plant and outside-plant connections must be considered.

- Model usability expands: The interface supports cross-functional use (i.e., facilities, network, and IT) without requiring continuous translation between tool-specific abstractions. This means a shared protocol, database, or model library must be used to build hierarchically and inclusively across all systems.

This shift, primarily associated with the introduction of shared hardware APIs and communication protocols, directly elevates visualization, connectivity modeling, and structured documentation. To meet its base requirements, modern systems must not only integrate complex data sources but also actively discover and adapt to network-connected devices. This ties directly into the second shift: interoperability.

Shift 2: Siloed tooling to interoperability

The ideal DCIM should operate as an integration layer rather than a standalone application. Embedded device telemetry (SNMP and OEM APIs), mature monitoring stacks, ITSM workflows, and CMDB-like inventories define steady-state operations in most environments. DCIM platforms will be expected to participate in that ecosystem through connectors, APIs, and consistent data exchange patterns. The ideal realization of these aspects can lead to the following benefits:

- Both internal and external interfaces matter: DCIM must exchange data across both internal and external interfaces, including monitoring and analytics systems, ITSM/CMDB, network systems, and custom data sources.

- Hybrid entities become routine: Physical infrastructure will remain the core for data sources, but modeling frequently extends to virtual/cloud-adjacent objects and logical dependencies. This offers the opportunity for relational and transient behavior analysis.

- Platform maturity trends toward consolidation: The most mature examples of this shift in the DCIM software market typically provide expansive connector libraries and are updated regularly. To this end, the platform should offer broad interoperability, supporting modern Web APIs for software integration and diverse industrial protocols for device communication. Additionally, template modeling breadth should be substantial enough to handle most potential objects.

Because component data is disparate and originates largely from multiple hardware sources, the ideal DCIM software places strong emphasis on interoperability, robust APIs, and adoption-friendly migration tooling.

Shift 3: Operations and maintenance to optimization under constraint

As user demand and the total complexity of a data center system grow, capacity and risk constraints increasingly dictate operational outcomes. Power availability, cooling capacity, network throughput, redundancy assumptions, and shorter deployment cycles reduce timeline tolerances for documentation development, fault resolution, and schedule drift. As a result, modern DCIM software solutions are expected to support constraint-aware planning and optimization, not merely inventory:

- Capacity planning becomes continuous: Headroom and constraint analysis will be required at multiple levels (rack/row/room/distribution). This also occurs at the component level for power and thermal, and at the component port level for the network.

- Optimization becomes measurable: Utilization, density risk, and efficiency targets will directly influence deployment decisions.

- Sustainability reporting becomes integrated: Efficiency and environmental reporting increasingly sit adjacent to operational planning and compliance requirements.

This shift moves monitoring telemetry into a more central role in typical operations. It also connects telemetry to predictive or planning models and makes reporting and workflow governance operationally relevant. A key example can be seen in the technical requirements introduced by virtual and cloud components: logical-to-physical mapping, high-frequency data polling, and programmable infrastructure, to name a few. The most direct constraints these technologies introduce center on density and volatility, where system management is no longer only about fixed hardware but about a fluid, hybrid compute fabric.

Essential features of DCIM software

Considering the evolutionary shifts described above, along with the key technical requirements and benefits associated with implementation, the essential features of the ideal DCIM software must reflect the needs of the modern user. Furthermore, these features need to be evaluated practically based on whether they improve operational fidelity across the full lifecycle: documenting what exists, observing what is true in production, planning changes safely, executing those changes with governance, and maintaining alignment through integrations and reporting.

Though not exhaustive, the following list of features aligns with our examination of evolutionary trends in DCIM software and provides a practical baseline for what should be considered essential to any modern solution.

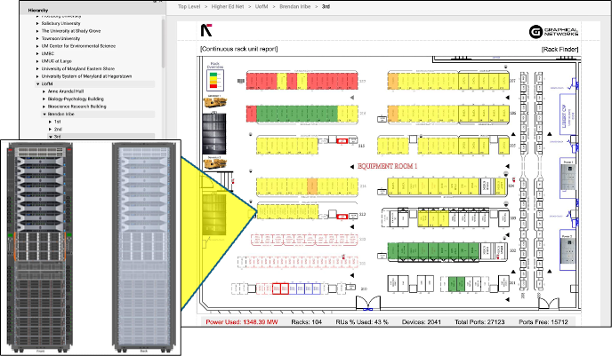

Visualization and digital twins

The first essential feature is a mature visualization layer, providing an operational interface into the underlying DCIM data model. Its primary function is to reduce time to understanding for cross-functional stakeholders by unifying spatial context (where things are) with relationship context (what they connect to and depend on). The image below represents a simple example of a floorplan view: this would be one layer of a complex visualization schema that ties physical assets to a digital interface.

In modern deployments, visualization is typically assessed as a capability set rather than a single view type. Requirements commonly include:

- Hierarchical navigation across physical domains (site → room → row → rack → device → card/port). These domains should not be rigid but instead highly customizable based on the user's needs.

- Spatial representations (floorplans, rack elevations) tied to allocatable constraints and operational states. Various formats should be supported, including grid layouts, AutoCAD 2D/3D views, and other graphical options.

- Relationship views for topology and dependency modeling that reflect both physical and logical connectivity

- End-to-end tracing to support impact analysis during change windows and incident response. This should be able to accommodate tracing of data lines, power lines, and also provide recommended circuit design features for system reliability.

The differentiator is often model flexibility. The platform must represent real-world variations in architecture and documentation standards without forcing teams into brittle conventions that slow adoption.
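As a rough illustration of what a flexible hierarchy can look like, the sketch below models the site → room → row → rack → device → port chain as a generic tree in which the level name is free-form data rather than a fixed list. The class name `Node`, its fields, and the sample site are hypothetical and not taken from any particular DCIM product.

```python
from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass(eq=False)
class Node:
    """One object in the hierarchy; `kind` is free-form, not a fixed enum."""
    name: str
    kind: str
    children: list["Node"] = field(default_factory=list)
    parent: Optional["Node"] = field(default=None, repr=False)

    def add(self, child: "Node") -> "Node":
        """Attach a child and return it so calls can be chained."""
        child.parent = self
        self.children.append(child)
        return child

    def path(self) -> str:
        """Breadcrumb from the root down to this node."""
        parts = []
        node: Optional[Node] = self
        while node is not None:
            parts.append(node.name)
            node = node.parent
        return " / ".join(reversed(parts))

    def walk(self) -> Iterator["Node"]:
        """Depth-first traversal of this node and everything below it."""
        yield self
        for child in self.children:
            yield from child.walk()


# Hypothetical site; "cage" is an extra level that a rigid schema might not allow.
site = Node("DC-East", "site")
room = site.add(Node("Room 1", "room"))
cage = room.add(Node("Cage A", "cage"))
rack = cage.add(Node("R01", "rack"))
switch = rack.add(Node("sw-01", "device"))
port = switch.add(Node("Gi1/0/1", "port"))

print(port.path())
port_names = [n.name for n in site.walk() if n.kind == "port"]
```

Because the level is stored as data, adding a "cage" or "pod" tier is a modeling decision rather than a code change, which is the kind of flexibility the paragraph above describes.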

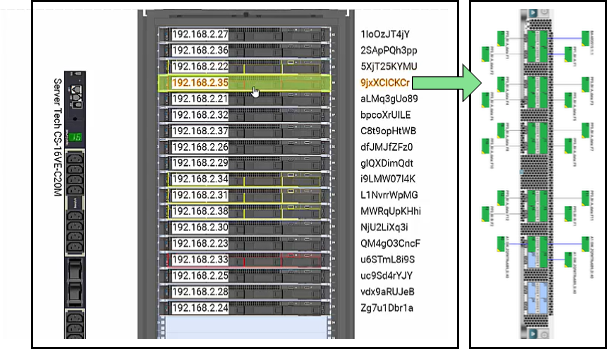

Asset and connectivity management

Asset management defines the system of record for infrastructure objects, identifiers, and lifecycle state. Connectivity management extends that record into operational truth by documenting the physical plant and interconnects that govern service dependency and failure impact.

A mature implementation is characterized by completeness at the rack/port layer and by extensibility that supports heterogeneity without continuous vendor involvement. Common requirements include:

- Device and port modeling: Racks, devices, cards, ports, and service-relevant attributes (serial/part numbers, tags, owners, states). Users should not be constrained on the type of device being modeled, but instead have the freedom to incorporate any object-property combination needed.

- Catalog extensibility without coding: The ability to extend device libraries, properties, and behaviors through configuration rather than custom development

- Labeling and naming standards: Rack/row conventions, port naming, cable labels, circuit identifiers, and consistent cross-referencing. The ideal DCIM solution should be able to not only set these standards but also help users realize them in real-world applications.

- Cable, circuit, and fiber management: Patching records, strand-level tracking where required, data center cable management, and circuit path documentation

- Trace-ready connectivity: Connectivity records structured specifically to enable reliable end-to-end path analysis

The image above demonstrates the relationship between asset definition in a virtual visualization along with the representation of key asset connections. Connectivity accuracy is frequently the primary driver of operational trust, and without high confidence in the relationship between these two views, operations suffer. When tracing and labeling are reliable, incident response is faster, and change risk decreases materially.
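To make "trace-ready" connectivity concrete, here is a minimal sketch that treats documented links as an undirected graph and uses a breadth-first search to return the hop-by-hop path between two ports. The port identifiers, the `links` list, and the function names are illustrative assumptions; a real platform would read these records from its own data model.

```python
from collections import deque

# Hypothetical connectivity records: each tuple is one documented link.
links = [
    ("srv-01:eth0", "pp-A:1"),        # server NIC to patch panel A
    ("pp-A:1", "pp-B:7"),             # cross-connect between panels
    ("pp-B:7", "sw-01:Gi1/0/1"),      # patch panel B to switch port
    ("srv-02:eth0", "pp-A:2"),        # documented, but goes no further
]


def build_adjacency(links):
    """Index links in both directions so a trace can start at either end."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    return adjacency


def trace(adjacency, start, end):
    """Breadth-first search; returns the list of hops, or None if no path."""
    if start not in adjacency:
        return None
    previous = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == end:
            path = []
            while current is not None:
                path.append(current)
                current = previous[current]
            return path[::-1]
        for neighbor in sorted(adjacency[current]):
            if neighbor not in previous:
                previous[neighbor] = current
                queue.append(neighbor)
    return None


adjacency = build_adjacency(links)
full_path = trace(adjacency, "srv-01:eth0", "sw-01:Gi1/0/1")
broken_path = trace(adjacency, "srv-02:eth0", "sw-01:Gi1/0/1")
```

In this sample data the second trace returns `None`, which is itself useful: a missing path usually points to an undocumented patch rather than a missing cable.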

Monitoring and environmental telemetry

Environmental monitoring remains fundamental to DCIM. Modern expectations expand the telemetry surface area to include facility sensors, power infrastructure signals, embedded device telemetry, and network-facing health data. The technical requirement is not only collection but also alignment between the measured state and the modeled state.

In practice, monitoring capability is evaluated through both coverage and reconciliation. Common requirements include:

- Environmental telemetry: Power, temperature, humidity, leak detection and alarm states, and other facility infrastructure measurements where applicable

- Embedded OEM telemetry: Incorporation of protocols such as SNMP, Modbus, BACnet and/or vendor APIs for device status, power draw, alarms, interface state, and health indicators.

- Event and alarm workflows: Actionable alarms with context that links back to assets, locations, and dependencies

- Discovery and reconciliation: Mechanisms to identify assets or attributes via the network and reconcile the observed state with the documented state

Telemetry becomes materially more valuable when it feeds other features. It informs capacity models, triggers governed workflows, and improves data quality through continuous verification.
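The reconciliation step can be sketched as a comparison between two keyed record sets: what the documentation says and what discovery observed. The example below assumes assets are keyed by serial number and that both sides have already been normalized into plain dictionaries; the serials, attribute names, and the `reconcile` function are hypothetical.

```python
def reconcile(documented, discovered):
    """Compare documented records with discovered ones, keyed by asset ID."""
    doc_ids = set(documented)
    seen_ids = set(discovered)

    mismatched = {}
    for asset_id in sorted(doc_ids & seen_ids):
        # Only attributes that discovery actually reported are compared.
        diffs = {
            key: (documented[asset_id].get(key), observed)
            for key, observed in discovered[asset_id].items()
            if documented[asset_id].get(key) != observed
        }
        if diffs:
            mismatched[asset_id] = diffs

    return {
        "not_seen": sorted(doc_ids - seen_ids),       # documented, not observed
        "undocumented": sorted(seen_ids - doc_ids),   # observed, not documented
        "mismatched": mismatched,                     # attribute-level drift
    }


# Hypothetical sample data
documented = {
    "SN100": {"model": "PDU-24", "firmware": "1.2"},
    "SN200": {"model": "SW-48", "firmware": "9.1"},
    "SN300": {"model": "SRV-2U", "firmware": "4.0"},
}
discovered = {
    "SN100": {"model": "PDU-24", "firmware": "1.4"},
    "SN200": {"model": "SW-48", "firmware": "9.1"},
    "SN400": {"model": "UPS-10", "firmware": "3.0"},
}

report = reconcile(documented, discovered)
```

Each of the three buckets maps to a different follow-up: an asset that was not seen may be powered off or decommissioned, an undocumented asset needs a record, and a mismatch needs a decision about which side is authoritative.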

Capacity planning and optimization

Capacity planning quantifies headroom and constraints in a form that supports decision-making. In higher-density environments, capacity is not a single number—it is a layered model spanning data center power distribution, thermal limits, redundancy assumptions, and growth scenarios.

Effective DCIM implementations provide constraint-aware analysis at multiple aggregation levels. They support planning decisions by making tradeoffs explicit: current capacity is reliably tracked, binding constraints are identified, and operational risks are detailed for any further allocation.
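A simple way to see why capacity is a layered model is to compute power headroom per rack and then roll it up per row. The sketch below is illustrative only: the 0.8 derating factor, the rule that a redundant rack's load must fit on a single surviving feed, and all names and figures are assumptions rather than values taken from this article or any standard.

```python
from dataclasses import dataclass


@dataclass
class RackPower:
    rack: str
    row: str
    feed_kw: float      # rated capacity of ONE feed
    feeds: int          # number of feeds to the rack
    redundant: bool     # True: load must fit on a single surviving feed
    peak_kw: float      # measured peak draw from telemetry

    def usable_kw(self, derate: float = 0.8) -> float:
        """Capacity that can safely be allocated under the assumptions above."""
        surviving_feeds = 1 if self.redundant else self.feeds
        return self.feed_kw * surviving_feeds * derate

    def headroom_kw(self, derate: float = 0.8) -> float:
        """Positive means spare capacity; negative means over the limit."""
        return self.usable_kw(derate) - self.peak_kw


def row_headroom(racks, derate: float = 0.8):
    """Roll rack headroom up to the row level."""
    totals = {}
    for r in racks:
        totals[r.row] = totals.get(r.row, 0.0) + r.headroom_kw(derate)
    return totals


# Hypothetical measurements
racks = [
    RackPower("R01", "A", feed_kw=10.0, feeds=2, redundant=True, peak_kw=6.5),
    RackPower("R02", "A", feed_kw=5.0, feeds=2, redundant=False, peak_kw=8.5),
    RackPower("R03", "B", feed_kw=10.0, feeds=2, redundant=True, peak_kw=3.0),
]

per_row = row_headroom(racks)
over_limit = [r.rack for r in racks if r.headroom_kw() < 0]
```

With this sample data, row A still shows positive headroom in aggregate even though one of its racks is over its usable limit, which is why the analysis needs to be available at more than one aggregation level.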

Sustainability and efficiency often belong in this feature because density and utilization decisions increasingly intersect with cost, utilization targets, and compliance-driven reporting requirements. Further, the ability to properly visualize these data points – via the features described previously – such that they can be both machine readable and human legible is essential for identifying these intersection points and ultimately driving action.

Workflow, governance, and change control

Governed workflow turns DCIM into an operational system rather than a reference system. Work orders, approvals, and audit trails formalize change execution and reduce the likelihood of documentation lagging behind physical reality.

In mature environments, change control supports repeatability: standardized task sequences, technician workflows aligned to physical constraints, and evidence capture that makes post-change validation possible. Even when an organization runs an ITSM platform, DCIM workflow features remain relevant because they bind change processes to the physical layer—space, power, connectivity, and access controls.

Governance also serves data integrity. When updates are part of execution, documentation drift decreases, and the model remains trustworthy under stress. The ideal DCIM software naturally pushes for this outcome but also maintains the freedom to incorporate existing workflows, integrations, or tools that are already part of the user’s governance process.

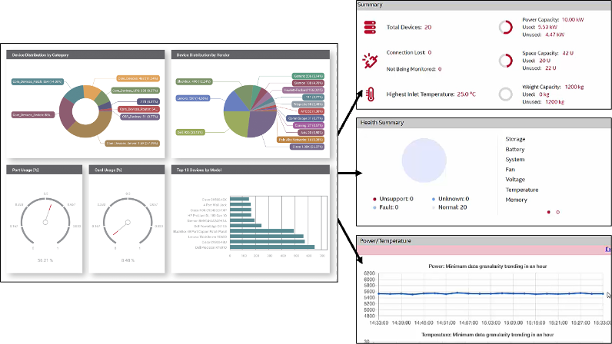

Reporting and BI

Reporting is the mechanism that delivers DCIM value to different stakeholders without forcing them into the same interface or abstraction level. Engineering stakeholders typically require trend analysis and performance context, operations require lifecycle and maintenance scheduling, and compliance requires auditability and standardized outputs. The image below shows an example of a standard monitoring and reporting mechanism, utilizing a frontend dashboard with detailed data collation capabilities stored for subsequent or real-time review.

A key maturity marker for data center infrastructure management software is self-service reporting. As shown in the image above, the expectation should not only be the visualization of operational metrics but also the availability of key details that can be filtered or selected based on user needs. If new reports require vendor intervention, the reporting function does not scale with organizational needs.

Modern platforms either provide built-in BI functionality or integrate cleanly into external analytics systems. In many environments, integrating DCIM data into broader dashboards—such as those built with open source tools like Grafana—adds value, as long as the platform exposes consistent, well-documented APIs and data profiles.

Interoperability and adoption

The decision to implement a new DCIM system is frequently based on the real or perceived friction associated with adoption. Most organizations begin DCIM implementation with existing artifacts and systems: spreadsheets, CSV exports, Visio diagrams, legacy tools, or data residing in databases. While the end-state operability is a key aspect of the ideal DCIM solution, mechanisms that can ease the translation of existing artifacts into a new system are just as important. Therefore, bulk import and migration are a first-order requirement, not a secondary convenience.

The image below represents a typical integration strategy associated with mature interoperability and easy artifact importing. An ideal DCIM software solution should make multi-baseline translation frictionless, allowing users to adopt new management software while also leveraging existing resources.

True realization of this essential feature also includes steady-state integration, which sustains the system’s relevance over time. This means that interoperability is not a one-time action but a long-term strategy at all stages of the operational lifecycle. Mature platforms typically support:

- Bulk import and migration tooling: Excel/CSV ingestion, mapping and validation, repeatable import pipelines, and options to import from databases or structured exports

- Integration patterns across three categories: Prebuilt connectors, customer-built connectors (ideally via a connector factory or low-code approach), and custom system/database integrations

- A robust, documented API: Coverage of operational actions performed through the UI, enabling automation and interoperability without fragile workarounds

- Interoperability in steady state: Synchronization or data exchange with monitoring, ITSM/CMDB, network systems, and bespoke enterprise data sources.
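The "mapping and validation" part of bulk import can be sketched with Python's standard `csv` module: spreadsheet headers are mapped to model fields, each row is checked, and rejected rows are reported with their line numbers instead of being silently dropped. The column names, the required fields, and the 42U default rack height are assumptions chosen for illustration.

```python
import csv
import io

# Hypothetical mapping from spreadsheet headers to model field names.
COLUMN_MAP = {
    "Asset Name": "name",
    "Rack": "rack",
    "U Pos": "u_position",
    "Serial #": "serial",
}
REQUIRED = ("name", "rack", "serial")


def import_rows(csv_text, rack_height=42):
    """Map and validate rows; returns (accepted, rejected) lists."""
    accepted, rejected = [], []
    reader = csv.DictReader(io.StringIO(csv_text))
    for line_no, raw in enumerate(reader, start=2):  # line 1 is the header
        row = {
            COLUMN_MAP[col]: (value or "").strip()
            for col, value in raw.items()
            if col in COLUMN_MAP
        }
        problems = [f"missing {name}" for name in REQUIRED if not row.get(name)]
        u_text = row.get("u_position", "")
        if u_text.isdigit() and 1 <= int(u_text) <= rack_height:
            row["u_position"] = int(u_text)
        else:
            problems.append(f"invalid rack unit {u_text!r}")
        if problems:
            rejected.append((line_no, problems))
        else:
            accepted.append(row)
    return accepted, rejected


# Hypothetical export from an existing spreadsheet
SAMPLE = """\
Asset Name,Rack,U Pos,Serial #
sw-01,R01,40,SN200
srv-01,R01,,SN101
,R02,12,SN102
"""

accepted, rejected = import_rows(SAMPLE)
for line_no, problems in rejected:
    print(f"line {line_no}: {', '.join(problems)}")
```

Keeping the mapping in a plain dictionary makes the import repeatable: the same file, or next month's export with the same headers, can be run through the same pipeline without manual cleanup.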

Among the essential features presented here, interoperability and frictionless adoption may be the most important. Even considered on its own, this feature can be a powerful gauge of overall DCIM performance and can highlight the long-term viability of the platform.

Ultimately, the goal of DCIM software should be to provide a bridge from flat documentation and heterogeneous starting points into a configurable, model-driven solution that teams can extend without disproportionate engineering overhead.


Conclusion

The essential capabilities described here represent a new baseline for modern DCIM: a navigable operational model, credible telemetry and reconciliation, constraint-aware capacity planning, governed change execution, flexible reporting, and interoperability that supports both adoption and long-term integration. Given the recent evolution of data center infrastructure management software, highlighted by the three main shifts discussed above, user expectations should align with these new technological benchmarks.

Within a rapidly changing infrastructure landscape, the ideal DCIM software is defined less by any single feature and more by its ability to remain accessible to users, flexible enough to model real environments, and mature enough to integrate into day-to-day operations. The ultimate objective is sustained operational fidelity: a system that supports safe growth, reduces avoidable risk, and improves decision-making across facility and IT domains. Though the question of which solution best lives up to the ideal can only be answered by each user, the features and technical requirements proposed here are essential to any data center infrastructure management software worth considering.